I just made a major speedup to the loading/next turn/new game code in Democracy 4, and thought I may as well share the gains with readers of this blog :D. It kind of makes me look a bit like an idiot to admit this is a big speedup (I should have known), but anyway, knowledge sharing (especially on optimization) is always good.

Fundamentally, the code in Democracy 4 is structured like a neural network. Without going into tons of details, every object in the game (policy, dilemma option, voter, voter group, situation…) is modeled as a neuron, which is basically just a named object connected by a ton of inputs and outputs to other neurons. You can run through the inputs and outputs, process the values and get a current value for a neuron at any time, which is done for every one of them, every turn.

Also… when we start a new game, I need ‘historical’ data for each value, so the game pre-processes the whole simulation about 30 times before you start, to give us meaningful background data and to ensure the current simulation sits at a reasonable equilibrium.

Those connections to neurons should probably be called dendrites or whatever, but I call them SIM_NeuralEffect. They contain basically the names of a host and a target (resolved to actual C++ pointers to objects), and an equation explaining the connection, and some other housekeeping stuff.
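To make that structure concrete, here is a minimal sketch of how neurons and their effects might be laid out. Only the name SIM_NeuralEffect comes from the actual game; the `Neuron` and `NeuralEffect` structs, their fields and the `Resolve` helper are hypothetical stand-ins, and the direction (host feeds target) is an assumption.

```cpp
#include <string>
#include <unordered_map>
#include <vector>

struct Neuron;

// Hypothetical stand-in for SIM_NeuralEffect; field names are guesses and
// the real class carries more housekeeping than this.
struct NeuralEffect {
    std::string hostName;     // names as written in the data files
    std::string targetName;
    std::string equation;     // raw equation text describing the connection
    Neuron* host = nullptr;   // filled in once by Resolve()
    Neuron* target = nullptr;
};

// Hypothetical neuron: a named value plus its incoming and outgoing effects.
struct Neuron {
    std::string name;
    double value = 0.0;
    std::vector<NeuralEffect*> inputs;
    std::vector<NeuralEffect*> outputs;
};

// Look both names up once, so per-turn processing only follows pointers.
// Returns false (and changes nothing) if either name is unknown.
bool Resolve(NeuralEffect& effect,
             const std::unordered_map<std::string, Neuron*>& byName) {
    auto host = byName.find(effect.hostName);
    auto target = byName.find(effect.targetName);
    if (host == byName.end() || target == byName.end()) return false;
    effect.host = host->second;
    effect.target = target->second;
    effect.host->outputs.push_back(&effect);   // assumed direction: host -> target
    effect.target->inputs.push_back(&effect);
    return true;
}
```

The point of resolving names up front is the same one this whole post is about: do the string work once at load time, not every turn.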

At the heart of it all is an equation processor which lets you write this:

OilPrice,0+(0.22*x)*GDP

And actually turn it into a value for that effect, given the current situation. The equation processor runs each turn, on every neural effect, and there are LOTS of them. Thus, if the equation processor is slow, it's all slow.

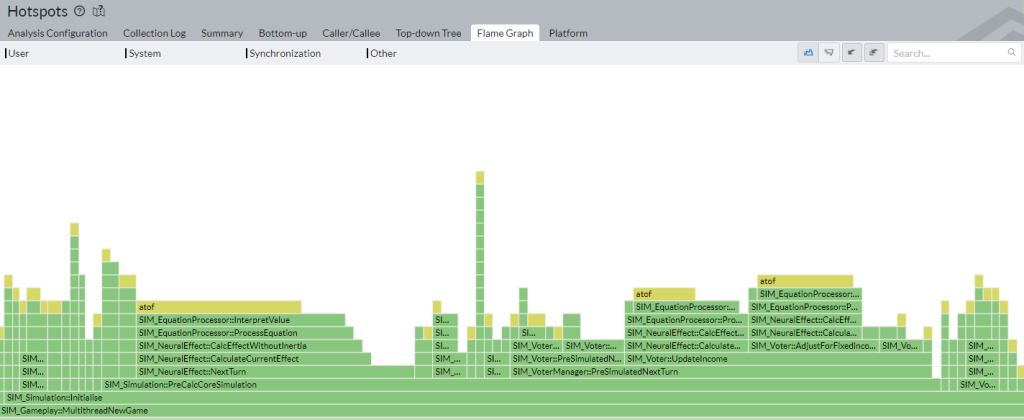

I just installed a new version of the free VTune profiler from Intel. It's not recognizing my ultra-amazing new chip, so it's only doing usermode sampling, but nonetheless it draws pretty flame charts like this:

Before I optimised

This is showing the code inside that 30-turn pre-game processing called PreCalcCoreSimulation. Lots of stuff goes on, but what I immediately noticed was all this atof stuff. Omgz. That's a low-level C runtime function, not one of mine, and it seems to be slowing down everything. This is a HUGE chunk of the whole equation processing code. How is this possible?

Now, you may think ‘dude, atof is pretty standard. No way are you going to be able to make that code faster’, to which I reply ‘dude, obviously not. But the fastest code is code that never runs.’

All those calls to atof are absolute nonsense.

Looking back at the equation above (OilPrice,0+(0.22*x)*GDP) there is obviously some stuff in there which is volatile. I do not know what the current value of x or GDP is, so I will need to grab their pointers and query them when I process the equation, but the rest of that stuff is static. That * is going to remain * and that 0 and 0.22 will remain fixed too. This is the key to a roughly 33% speedup of the whole processing in the game.

I actually did know to look into this, and I do not do manual text parsing of the equation each time. I parse the equation on startup, and stick the various values into buckets, so I am not wasting time each turn. But one thing I had not done is store the atof() outcomes. I was still storing variables[0] as the text ‘0’ instead of just the number 0.

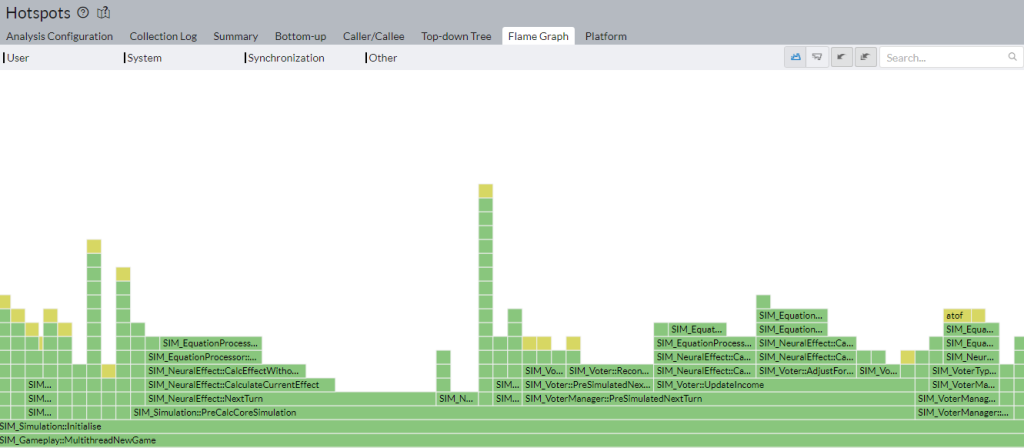

Now you may think atof is fast. It's fast enough for most cases, but it's WAY slower than just accessing the value of a floating point number that's already in RAM, and cached happily in the equation processor itself. Here is the new diagram:

Faster!

The difference (on my superfast PC), for the whole precalc simulation function: 0.85 seconds, down from 1.50. This probably makes me sound pedantic as hell, but I’m rocking some stupidly new and pricey PC, so there are likely people playing D4 on laptops a fifth the speed. I might be knocking a whole 3 seconds off the new game time for some players!
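As a rough back-of-envelope check on that estimate, assuming 1.50 s is the time before the change and that a laptop a fifth the speed simply takes five times as long (a simplification, real machines will not scale this neatly):

$$
1.50\,\text{s} - 0.85\,\text{s} = 0.65\,\text{s}, \qquad 5 \times 0.65\,\text{s} = 3.25\,\text{s}
$$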

Also, and worth remembering, I just saved doing a ton of processing, which means a ton of CPU time, power and heat. If you can make your game run more quietly, more coolly, and faster on players' PCs, you absolutely should do it.