Before I even start this post, I should point out that currently the project I am coding is for fun. I actually really ENJOY complex programming design challenges, unlike many people, so the comment ‘this is done for you in X’ or ‘there is middleware that solves this without you doing anything in Y’ is irrelevant to me, and actually depressing. Whatever happened to the joy of working on hard problems?

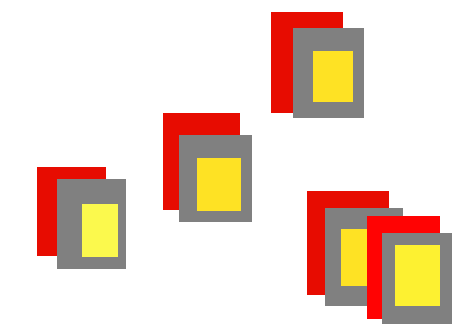

Take an imaginary game that has a lot of entities in it. Say 500. Each entity is a sprite. That sprite has an effect drawn on top of it (say it’s a glowy light) and a different effect below it (say it’s another glowy light). The objects have to be rendered in a sensible way, so that if one object passes over another (for example) the glowy lights ‘stack’ properly, and you don’t see aberrations where the lights from something are seen through something else. Here is a visualisation:

In this case red is the ‘underglow’, grey is the sprite, and yellow is the overglow. The bottom right duo shows the issue where things have to be rendered correctly. In a 3D game with complex meshes and no antialiasing, you just use a zbuffer to draw all this stuff, and forget about it, but if you are using sprites with nice fuzzy edges (not horrible jaggy pixels), you really need to use the classic ‘painter’s algorithm’ of drawing from back to front for each object. There are clear performance problems:

A naïve way to code this would be my current system, which handles each object in 3 draw calls. So we set up the blend mode for the underglow, and draw that, then set a normal blend mode, draw the sprite, then a glowy blend mode to draw the top glow, then repeat for every other entity. The trouble is, with 500 entities, that’s 1,500 draw calls simply to draw the most simple part of the game…
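To make the cost concrete, here is a minimal sketch of that naïve loop. It is not the game’s real code: `CountingDevice`, `Blend` and `drawNaive` are made-up names, and the ‘device’ just counts what it is asked to do instead of talking to the graphics API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical blend modes, named after the three layers in the text.
enum class Blend { Underglow, Normal, Overglow };

// Stand-in for the real graphics device: it only counts requests.
struct CountingDevice {
    std::size_t stateChanges = 0;
    std::size_t drawCalls = 0;
    void setBlend(Blend) { ++stateChanges; }
    void drawQuad(int /*entityId*/) { ++drawCalls; }
};

// Painter's algorithm, one entity at a time, back to front.
void drawNaive(CountingDevice& dev, const std::vector<int>& backToFront) {
    for (int id : backToFront) {
        dev.setBlend(Blend::Underglow); dev.drawQuad(id);
        dev.setBlend(Blend::Normal);    dev.drawQuad(id);
        dev.setBlend(Blend::Overglow);  dev.drawQuad(id);
    }
}

// Counts the draw calls the naive loop issues for a given entity count.
std::size_t naiveDrawCallCount(std::size_t entityCount) {
    std::vector<int> ids(entityCount);
    for (std::size_t i = 0; i < entityCount; ++i) ids[i] = static_cast<int>(i);
    CountingDevice dev;
    drawNaive(dev, ids);
    // In this scheme every single draw call also costs a blend state change.
    assert(dev.stateChanges == dev.drawCalls);
    return dev.drawCalls;
}
```

Calling `naiveDrawCallCount(500)` gives the 1,500 figure above, and the same number of blend state changes on top.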

Unfortunately this only scratches the surface because there may also be other layers of things that need drawing on top of each entity, and between drawing each sprite. However there is a major optimisation to be had…. <drumroll>. Actually my game really works like this:

Where there is a grid, and <generally speaking> the entities stay in one grid box at a time. WITHIN that grid box, all sorts of stuff may happen, but items in the top left grid box will not suddenly appear in the bottom right grid box. In other words I can make some clever assumptions, if only I can find a way to generalize them into an algorithm.

For example I could draw the underglow for one object in each grid box all together in a single draw call like this:

So this is suddenly one draw call instead of 8. I then do the same for the sprites, then the overglow, and then do the other object in each grid square as a second pass (basically a second Z layer). Assuming 8 grid squares, 2 per square, 3 passes, that’s 48 draw calls for the naïve method and 6 for the new method. Pretty awesome! However, that’s oversimplifying things. Firstly, in some cases there are 16 entities in each grid square, in others just 1. Secondly, in some cases items ‘spill’ over into the next grid square, so I need to ensure I do not get an anomaly where 2 objects would overlap just slightly and thus z-fight when drawn…
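The arithmetic can be sketched like this, under the simplifying assumption that nothing spills into a neighbouring square. The function names are invented for the illustration. The point it makes is that the batched count depends on the busiest square, not on the total number of entities.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

constexpr std::size_t kPasses = 3;  // underglow, sprite, overglow

// Naive method: every entity gets its own three draw calls.
std::size_t naiveCalls(const std::vector<std::size_t>& entitiesPerSquare) {
    std::size_t total = std::accumulate(entitiesPerSquare.begin(),
                                        entitiesPerSquare.end(),
                                        std::size_t{0});
    return total * kPasses;
}

// Batched method: one Z layer per entity in the busiest square, and each
// layer costs three draw calls however many squares contribute to it.
// Assumes no entity overlaps a neighbouring square.
std::size_t batchedCalls(const std::vector<std::size_t>& entitiesPerSquare) {
    assert(!entitiesPerSquare.empty());
    std::size_t layers = *std::max_element(entitiesPerSquare.begin(),
                                           entitiesPerSquare.end());
    return layers * kPasses;
}
```

With 8 squares holding 2 entities each, that gives 48 versus 6. If a single square holds 16 entities and the rest hold 1, the batched count rises to 48, because that one square forces 16 layers.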

Like everything in game coding, the simple code design always accelerates towards spaghetti, but I would like to do my best to avoid that if possible…

In order to decide what is drawn at what time, I basically need 2 pieces of metadata about each object. Item 1 is what grid square it is in, and item 2 is the Z value of that object, where I can cope with say 16 different Z ‘bands’ for the whole world, meaning a group of 16 entities in a square will definitely be drawn with 16 different draw calls, and thus be painter-algorithm-ed ok.
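As a rough sketch of that metadata (the `RenderMeta` struct, `assignZBands` and the 0-based band numbering are my own assumptions for illustration), each entity could simply take the next free Z band in its grid square, which guarantees no two entities in the same square share a band:

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <vector>

constexpr int kMaxZBands = 16;  // the limit suggested in the text

// The two pieces of metadata per object.
struct RenderMeta {
    int gridSquare;  // which grid square the entity lives in
    int zBand;       // 0 .. kMaxZBands - 1, unique within a square
};

// Gives each entity the next unused band in its own square.
// Input: the grid square index of every entity, in creation order.
std::vector<RenderMeta> assignZBands(const std::vector<int>& gridSquareOfEntity) {
    std::map<int, int> usedInSquare;  // square -> bands handed out so far
    std::vector<RenderMeta> meta;
    meta.reserve(gridSquareOfEntity.size());
    for (int square : gridSquareOfEntity) {
        int band = usedInSquare[square]++;
        // More than 16 entities in one square would break the scheme.
        assert(band < kMaxZBands);
        meta.push_back(RenderMeta{square, band});
    }
    return meta;
}
```

Entities in different squares may share a band, which is exactly what lets them go out in the same draw call.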

I also want to do this without having to do insane amounts of sorting and processing every frame, for obvious efficiency reasons…

So my first thoughts on this are to handle everything via Z sorting, and make the list of entities the de-facto Z sort. In other words, when I build up the initial list of entities at the start of the game, I have pre-sorted them into Z order. So instead of a single list of randomly jumbled Z orders, I get this situation, where the black number is the Z position, and the blue references the grid square:

So my now pre-sorted object list goes like this:

A1 B1 C1 D1 E1 F1 G1 H1 A2 C2 F2 etc..
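A minimal sketch of that one-off sort, assuming a hypothetical `Entity` with a grid square letter and a Z position (neither is the game’s actual data layout). It orders by Z first and by square second, and is meant to run once when the list is built rather than every frame:

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// Hypothetical entity record: 'A'..'H' for the square, 1, 2, ... for Z.
struct Entity {
    char square;
    int z;
};

// True if a should be drawn before b: lower Z first, then by square.
bool drawsBefore(const Entity& a, const Entity& b) {
    if (a.z != b.z) return a.z < b.z;
    return a.square < b.square;
}

// Sorts the list once, at load time, into drawing order.
void sortForDrawing(std::vector<Entity>& entities) {
    std::stable_sort(entities.begin(), entities.end(), drawsBefore);
    assert(std::is_sorted(entities.begin(), entities.end(), drawsBefore));
}

// Formats the list in the same "A1 B1 ..." notation used above.
std::string describe(const std::vector<Entity>& entities) {
    std::string out;
    for (const Entity& e : entities) {
        if (!out.empty()) out += ' ';
        out += e.square;
        out += std::to_string(e.z);
    }
    return out;
}
```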

I can then do batches of draw calls, so my actual code looks like this:

- DrawUnderGlowFor1()

- DrawSpritesFor1()

- DrawOverglowFor1()
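Generalised to any number of Z bands, those three calls become a loop over the pre-sorted list. The sketch below is an assumption about how that might look, not the real engine: instead of drawing, it writes a log line per batched call so the result can be inspected.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical entity record, already sorted by zBand.
struct Entity {
    char square;
    int zBand;
};

// Walks the sorted list one Z band at a time and issues three batched
// passes per band. Returns one log line per (pretend) draw call.
std::vector<std::string> drawAllBands(const std::vector<Entity>& sorted) {
    std::vector<std::string> log;
    std::size_t begin = 0;
    while (begin < sorted.size()) {
        // Find the end of the run of entities sharing this Z band.
        std::size_t end = begin;
        while (end < sorted.size() && sorted[end].zBand == sorted[begin].zBand) {
            ++end;
        }
        // The list must really be pre-sorted, or batches would be split.
        assert(end == sorted.size() || sorted[end].zBand > sorted[begin].zBand);

        std::string suffix = " z=" + std::to_string(sorted[begin].zBand) +
                             " n=" + std::to_string(end - begin);
        log.push_back("underglow" + suffix);  // DrawUnderGlowForN
        log.push_back("sprites" + suffix);    // DrawSpritesForN
        log.push_back("overglow" + suffix);   // DrawOverglowForN
        begin = end;
    }
    return log;
}
```

For the list `A1 B1 C1 D1 E1 F1 G1 H1 A2 C2 F2` this produces 6 log lines: three covering the 8 entities in band 1, then three covering the 3 entities in band 2.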

The nature of the game means that generally speaking, Z order can be fixed from the start and never change, which simplifies things hugely. The bit where it becomes a real pain is the overlap of objects to adjacent grid squares. I would also like a super-generic system that can handle not just these fixed entities, but anything else in the game. What I really need is a two layered graphics engine:

Layer 1 just sends out a ton of draw calls, probably with some sort of metadata. This is like a draw call, plus relevant texture, plus blend state for the object. This is done in a naïve way with no thought to anything else.

Layer 2 processes layer 1, does some analysis and works out how to collapse draw calls. It can calculate bounding boxes for everything that is drawn, see what overlaps with what, and what can be batched, and then decides on a final ‘optimised’ series of calls to DirectX. This is done before any textures get set or draw calls are made. It then hands all this off to ideally a new thread, which just streams those draw calls to DirectX, while the other threads get on with composing the next frame.
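One possible shape for layer 2, as a sketch only: the types, the integer texture and blend ids, and the greedy merging rule are all assumptions of mine, not a finished design. Layer 1 is just a `std::vector<DrawCommand>` in submission order. Layer 2 walks it and tries to slide each command back into an earlier batch with the same texture and blend state, stopping as soon as it meets a batch it overlaps, since drawing across that one would break the painter’s order.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

struct Rect { float x0, y0, x1, y1; };

bool overlaps(const Rect& a, const Rect& b) {
    return a.x0 < b.x1 && b.x0 < a.x1 && a.y0 < b.y1 && b.y0 < a.y1;
}

Rect unite(const Rect& a, const Rect& b) {
    return Rect{std::min(a.x0, b.x0), std::min(a.y0, b.y0),
                std::max(a.x1, b.x1), std::max(a.y1, b.y1)};
}

// Layer 1 output: one record per naive draw call (ids are hypothetical).
struct DrawCommand { int texture; int blend; Rect bounds; };

// Layer 2 output: commands sharing state, drawn together in order.
struct Batch {
    int texture;
    int blend;
    Rect bounds;  // union of member bounds; conservative on purpose
    std::vector<DrawCommand> commands;
};

std::vector<Batch> collapse(const std::vector<DrawCommand>& recorded) {
    std::vector<Batch> batches;
    for (const DrawCommand& cmd : recorded) {
        assert(cmd.bounds.x0 <= cmd.bounds.x1 && cmd.bounds.y0 <= cmd.bounds.y1);
        bool merged = false;
        // Search from the most recent batch backwards.
        for (auto it = batches.rbegin(); it != batches.rend(); ++it) {
            if (it->texture == cmd.texture && it->blend == cmd.blend) {
                it->commands.push_back(cmd);
                it->bounds = unite(it->bounds, cmd.bounds);
                merged = true;
                break;
            }
            // Cannot move earlier than something we would draw over.
            if (overlaps(it->bounds, cmd.bounds)) break;
        }
        if (!merged) {
            Batch b;
            b.texture = cmd.texture;
            b.blend = cmd.blend;
            b.bounds = cmd.bounds;
            b.commands.push_back(cmd);
            batches.push_back(b);
        }
    }
    return batches;
}
```

Two entities that are well apart, each submitting underglow, sprite and overglow, collapse from 6 commands to 3 batches, while two overlapping entities stay at 6. Using the union of bounds for a batch is deliberately pessimistic: it can miss a legal merge but should never reorder overlapping draws. Bypassing stage 2 would amount to turning every command into its own batch.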

I have attempted such a system once before in the past, but I was a less experienced coder then, and working full-time, and on a (self-imposed) deadline to ship the game, rather than obsess over noodling with rendering engine design. So hopefully this time, I will crack it, and then have something super cool that can handle whatever I throw at it :D. Also the beauty of this design is it would be easy to bypass stage 2 and just render everything as it comes in, so I could even hook up a hotkey to watch the frame rate difference and check I’m not wasting all my time :D.