No, I’m not talking about mine, but about *other people’s code* that I encounter on a day-to-day basis. Some choice examples:

When I start AQTime (a profiling app, ironically), it hangs for about ten seconds. Then when I load a project (the file is under 100k), it hangs for another ten seconds.

There is no discernible network activity during this time, and the CPU is not thrashed either. How is this even POSSIBLE? A quick check shows that my i7 3600 can do 106 billion instructions per second (106,000 MIPS). That’s insane. It also means that in those ten seconds, it can do about one trillion instructions. To do seemingly… nothing.

Also… for my sins I own a Samsung smart TV. When I start up that pile of crap, it often will not respond to remote buttons for about eight seconds, and even then, it queues them up, and can lag processing an instruction by two or three seconds. This TV has all eco options disabled (much though that pains me), and has all options regarding uploading viewing data disabled. Let’s assume its CPU runs at a mere 1% of the speed of my i7. That means it has to get by on a mere 1 billion instructions per second. My god, no wonder it’s slow, as I’m quite sure it takes a billion instructions just to change channels, right? (Even if it did, it could respond in about a second, not eight.)
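For anyone who wants to check the arithmetic, here is a rough sketch in Python. It takes the 106,000 MIPS figure at face value and assumes the TV's CPU is 1% as fast. Both are back-of-envelope guesses rather than measurements, and the constant and function names are made up for illustration.

```python
# Back-of-envelope instruction budgets. Every input here is a rough assumption.

DESKTOP_MIPS = 106_000            # figure quoted in the post (1 MIPS = 1 million instructions/second)
TV_MIPS = DESKTOP_MIPS // 100     # assumption: the TV's CPU runs at 1% of the desktop's speed


def instructions_per_second(mips: int) -> int:
    """Convert a MIPS rating into instructions per second."""
    return mips * 1_000_000


def instructions_in(seconds: int, mips: int) -> int:
    """How many instructions a CPU with this rating could execute in `seconds`."""
    return instructions_per_second(mips) * seconds


def seconds_for(instructions: int, mips: int) -> float:
    """How long a CPU with this rating would need to execute `instructions`."""
    return instructions / instructions_per_second(mips)


if __name__ == "__main__":
    print(f"Desktop: {instructions_per_second(DESKTOP_MIPS):,} instructions per second")
    print(f"Desktop, 10 second hang: {instructions_in(10, DESKTOP_MIPS):,} instructions")
    print(f"TV: {instructions_per_second(TV_MIPS):,} instructions per second")
    print(f"TV, one billion instructions: {seconds_for(1_000_000_000, TV_MIPS):.2f} seconds")
```

On these assumptions, a ten-second hang on the desktop is room for roughly a trillion instructions, and even a billion-instruction channel change on the TV would take just under a second.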

These smiling fools would be less chirpy if they knew how badly coded the interface was

I just launched HTMLTools, some software I use to edit web pages, and it took 15 seconds to load up and present an empty document. Fifteen seconds, on an i7 doing absolutely nothing of any consequence.

Why the hell do we tolerate this mess? Why have we allowed coders to get away with producing such awful, horrible, bloated work that doesn’t even come close to running at 1% of its potential speed and efficiency?

In any other realm this would be a source of huge anger, embarrassment and public shaming. My car can theoretically do about 150mph. Imagine buying such a car and then realizing that due to bloated software, it can only actually manage 0.1 miles per hour. Imagine turning on a 2,000-watt oven, and only getting 2 of those watts used to actually heat something. We would go absolutely bonkers.

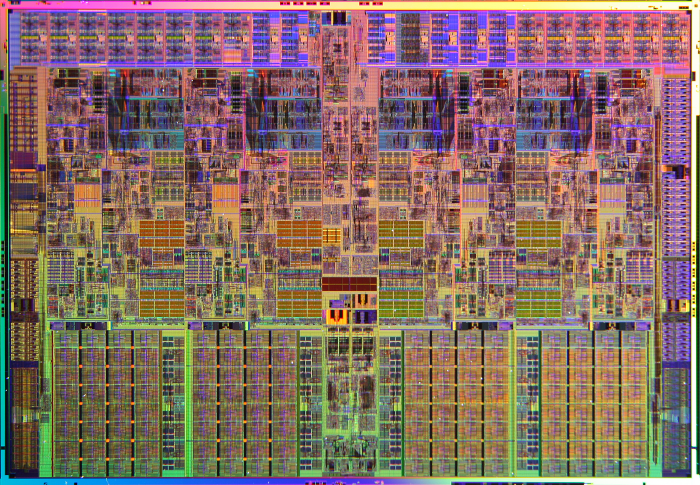

An Intel i7. So much capability, so little proper usage.

There is a VAST discrepancy between the amount of time it takes optimized computer code to do a thing, and the amount of time the average consumer/player/citizen thinks it will take. We need to educate people that when they launch an app and it is not 100% super-responsive, this is because it is badly, shoddily, terribly made.

Who can we blame? Well, maybe the people who make operating systems for one, as they are often bloated beyond belief. I use my smartphone a fair bit but not THAT much, and when it gets an update and tells me it’s ‘optimizing’ (yeah right) 237 apps… I ask myself what the hell is all of this crap, because I’m sure I didn’t install it. When the O/S is already a bloated piece of tortoise-ware, how can we really expect app developers to do any better?

I think a better source of blame is people who write ‘learn C++ in 7 days’ style books, who peddle this false bullshit that you can master something as complex as a computer language in less time than it takes to binge watch a TV series. Even worse is the whole raft of middleware which is pushed onto people who think nothing of plugging in some code that does god-knows-what, simply to avoid writing a few dozen lines of code themselves.

We need to push back against this stuff. We need to take a bottom-up approach where we start with what our apps/operating systems/appliances really NEED to do, and then try to create the fastest possible environment for this to happen. The only recent example I’ve seen of this is the designing of a dedicated self-driving computer chip for Tesla cars. Very long explanation below (chip stats about 7min 30 in):