I’m new to multithreading, and to the Visual Studio Concurrency Visualizer. I have some lovely multithreaded code working. Basically, my main thread throws the thread manager a bunch of tasks. The thread manager has a bunch of worker threads which all sit waiting until they are told to do a task. Once they have finished their task, they set an IDLE flag, and the main thread’s WaitForAllTasks function then assigns them a new one. It works just fine… but I notice an anomaly.

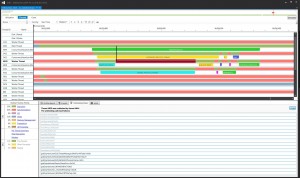

See below (Click to enlarge enormously)

The highlighted connection shows thread 4828 sat on its fat ass waiting for the main thread, when it is clearly idle and ready to do stuff. The thing is, the main thread function is just this:

    bool bfinished = false;
    while(!bfinished)
    {
        bfinished = true;
        for(int t = 0; t < MAX_THREADS; t++)
        {
            if(CurrentTasks[t] == IDLE_TASK)
            {
                //maybe get a queued item
                if(!QueuedTasks.empty())
                {
                    THREAD_TASK next_task = QueuedTasks.front();
                    QueuedTasks.pop_front();
                    CurrentTasks[t] = next_task;
                    SetEvent(StartEvent[t]);
                }
            }
            else
            {
                bfinished = false;
            }
        }
    }

Which basically does sod all. How can this take any time? SetEvent() signals an event that the worker thread’s function is waiting on with WaitForSingleObject(). Again… how can this take any time? Is there some polling delay in WaitForSingleObject()? Is this the best I can hope for? It’s the same story for all those delays; this one is just the largest. I’m new to this. Any ideas? :D
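One way to poke at the "how can this take any time?" question is to time the hand-off directly. The sketch below is not the code from this post. It assumes a portable stand-in for the Win32 event, built on `std::condition_variable`, so that `set()` plays the role of `SetEvent()` and `wait()` the role of `WaitForSingleObject()`. The names `AutoResetEvent` and `measure_wake_latency_us` are made up for illustration. It records the time between the main thread signalling and the worker actually running again. A thread blocked in a wait is not polling; it has to be rescheduled by the OS before it continues, so that gap may be where a delay like the one in the screenshot comes from.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical stand-in for a Win32 auto-reset event (an assumption for this
// sketch): set() ~ SetEvent, wait() ~ WaitForSingleObject.
class AutoResetEvent {
public:
    void set() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            signalled_ = true;
        }
        cv_.notify_one();
    }
    void wait() {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [this] { return signalled_; });
        signalled_ = false;  // auto-reset, ready for the next set()
    }
private:
    std::mutex mutex_;
    std::condition_variable cv_;
    bool signalled_ = false;
};

using Clock = std::chrono::steady_clock;

// For each round, returns the microseconds between the main thread calling
// set() and the worker thread returning from wait().
std::vector<long long> measure_wake_latency_us(int rounds) {
    assert(rounds > 0);
    AutoResetEvent start_event, done_event;
    Clock::time_point signalled_at, woke_at;
    std::vector<long long> latencies;

    std::thread worker([&] {
        for (int i = 0; i < rounds; ++i) {
            start_event.wait();     // blocked until the main thread signals
            woke_at = Clock::now(); // first thing the worker does once awake
            done_event.set();       // tell the main thread the sample is ready
        }
    });

    for (int i = 0; i < rounds; ++i) {
        // Give the worker time to actually block, so a real wake-up is timed.
        std::this_thread::sleep_for(std::chrono::milliseconds(1));
        signalled_at = Clock::now();
        start_event.set();
        done_event.wait();  // after this, woke_at is safe to read
        latencies.push_back(
            std::chrono::duration_cast<std::chrono::microseconds>(
                woke_at - signalled_at).count());
    }
    worker.join();
    return latencies;
}
```

Calling `measure_wake_latency_us(1000)` and looking at the largest values gives a rough feel for how long a sleeping thread takes to get going again on a given machine. The numbers will vary with load and core count, and the Win32 event path may behave differently from this condition-variable version.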