very techy post…

My latest game uses the same multi-threading code I’ve used before. This is C++ code under windows, on windows 10, using Visual Studio 2013 update 5. My multithreading code basically does this:

Create 8 threads (if an 8 core system). Create 8 events which are tracked by the 8 threads. At the start of the app all of the threads are running, all of the events are unset. As I get to a point where I wwant to doi some multithreaded code, i add tasks to my thread manager, and when I add them, I see which of my threads does not have a task scheduled, and I set that event:

PCurrentTasks[t] = pitem;

SetEvent(StartEvent[t]);

Thats done inside a CriticalSection, which I initialized with a spincount of 0 (although a 2,000 spiun count makes no difference).

Each of the threads has a threadprocess thats essentially this:

WaitForSingleObject(SIM_GetThreadManager()->StartEvent[thread], INFINITE);

When I get past that, I enter the critical section, grab the task assigned to me, do the actual thread work, then I immediately check to see if there is another task (again, this happens in a critical section). if so…I process that task, if not, I’m back in my loop of WaitForSingleObject().

Everything works just fine… BUT!

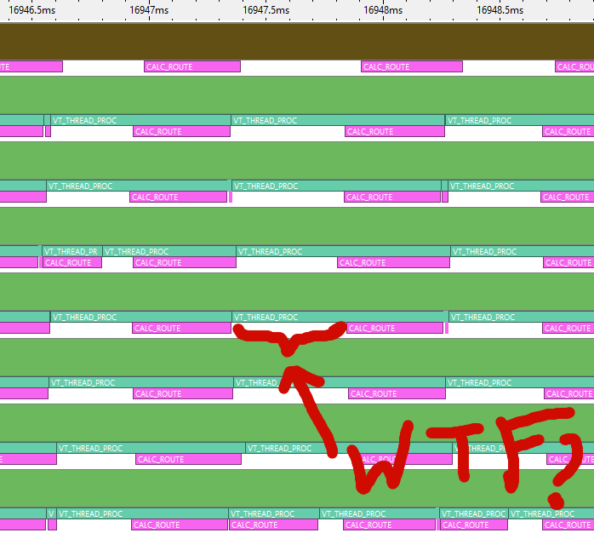

If I have a big queue of things to do, my concurrency charts from vtune show stuff like this:

Seriously, WTF is happening there? Those gaps are huge, according to the scale they are maybe 0.2ms long. What the hell is taking so long? Is there some fundamental problem with how I am multithreading using events? Does SetEvent() take a billion years to actually process? If feels like my code should be way more bunched up than it is.